Abstract

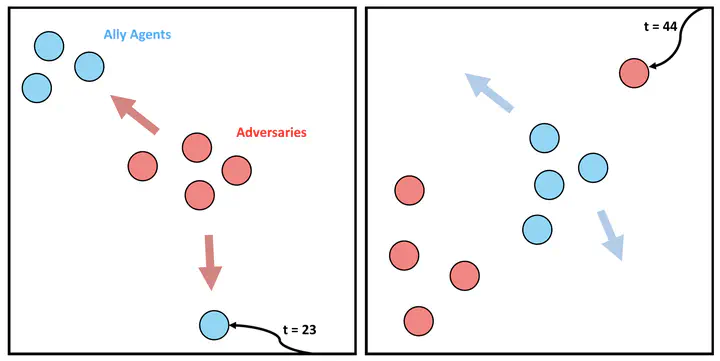

Multi-agent reinforcement learning has demonstrated significant potential in addressing complex cooperative tasks across various real-world applications. However, existing MARL approaches often rely on the restrictive assumption that the number of entities (e.g., agents, obstacles) remains constant between training and inference. This overlooks scenarios where entities are dynamically removed or added during the inference trajectory – a common occurrence in real-world environments like search and rescue missions and dynamic combat situations. In this paper, we tackle the challenge of intra-trajectory dynamic entity composition under zero-shot out-of-domain (OOD) generalization, where such dynamic changes cannot be anticipated beforehand. Our empirical studies reveal that existing MARL methods suffer significant performance degradation and increased uncertainty in these scenarios. In response, we propose FlickerFusion, a novel OOD generalization method that acts as a universally applicable augmentation technique for MARL backbone methods. Our results show that FlickerFusion not only achieves superior inference rewards but also uniquely reduces uncertainty vis-à-vis the backbone, compared to existing methods. For standardized evaluation, we introduce MPEv2, an enhanced version of Multi Particle Environments (MPE), consisting of 12 benchmarks. Benchmarks, implementations, and trained models are organized and open-sourced at this http URL, accompanied by ample demo video renderings.